Covalent version 0.106.0 is now live

Summary

The latest release of Covalent is now out and available for community use. It comes with new features, including support for local execution of workflows with a Dask plugin, remote execution of workflows with Slurm and SSH plugins, and new updates to the user interface. A summary of the feature releases is provided below:

- Support for workflow execution on a local Dask cluster is now available in Covalent;

- Support for workflow execution on remote machines with SSH access and Slurm;

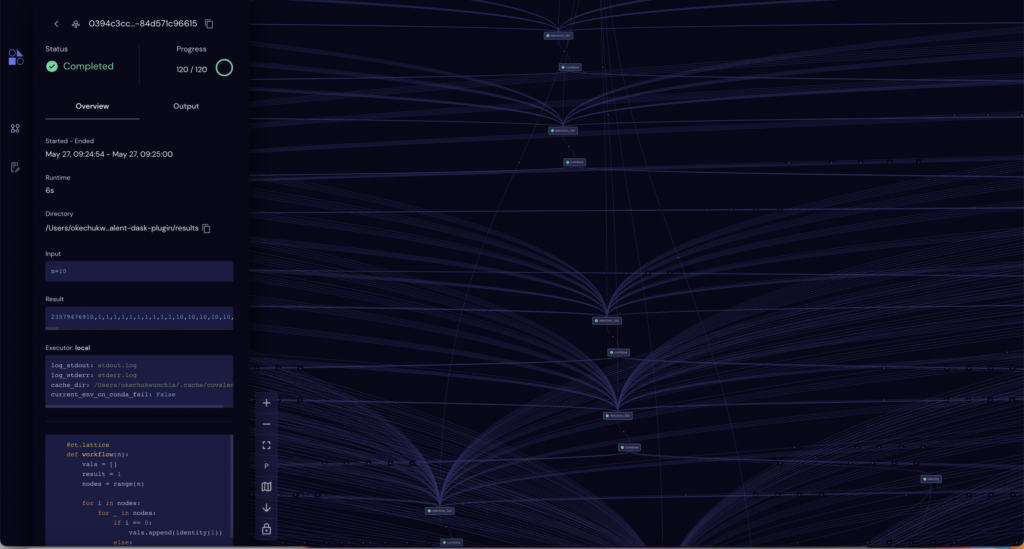

- The UI now includes a revamp in the color, theme, workflow graph and other visual elements.

Check out the summary table below for the list of added features and read the corresponding subsections to find out more.

| Feature | Summary |
|---|---|
| Dask plugin | Plugin that interfaces Covalent with a Dask cluster |
| SSH plugin | Plugin that interfaces Covalent with other machines accessible to the user over SSH |
| Slurm plugin | Plugin that interfaces Covalent with HPC systems managed by Slurm |

🧑‍💻 New Executors

Modularity is a fundamental principle of Covalent. This led us to design executors: modular plugins that dictate and control which hardware resource your task runs on. As an open-source team, we have made it easy for users to construct custom executors based on the template we have released. Using the same template, we are releasing three new executors: DaskExecutor, SlurmExecutor, and SSHExecutor.

🔌 Execution on a remote machine via SSH

Have you ever wondered whether you can run a hybrid experiment between a Raspberry Pi and a quantum computer? After a quick `pip install covalent-ssh-plugin`, you can interface Covalent with any machine accessible via SSH. This plugin can distribute tasks to one or more compute backends that are not controlled by a cluster management system, such as computers on a LAN, or even a collection of small-form-factor Linux-based devices such as Raspberry Pis, NVIDIA Jetsons, or Xeon Phi co-processors.

In order to use the SSH executor plugin, the user has to install the plugin with pip:

    pip install covalent-ssh-plugin

The following shows an example of how a user might modify their Covalent configuration to support this plugin:

    [executors.ssh]
    username = "user"
    hostname = "host.hostname.org"
    remote_dir = "/home/user/.cache/covalent"
    ssh_key_file = "/home/user/.ssh/id_rsa"

This setup assumes the user can connect to the remote machine using `ssh -i /home/user/.ssh/id_rsa user@host.hostname.org` and has write permissions on the remote directory `/home/user/.cache/covalent` (if it exists) or on the closest parent directory (if it does not).
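The connectivity assumption above can be checked before dispatching anything. The following is a minimal sketch, not part of the plugin: the helper name `build_ssh_check_command` is hypothetical, and the dictionary simply mirrors the example configuration. It only assembles the command; running it is left to the user.

```python
import shlex

# Values mirror the [executors.ssh] example; adjust them to your own setup.
ssh_config = {
    "username": "user",
    "hostname": "host.hostname.org",
    "remote_dir": "/home/user/.cache/covalent",
    "ssh_key_file": "/home/user/.ssh/id_rsa",
}


def build_ssh_check_command(config):
    """Build an ssh command that tests login and write access to remote_dir.

    Hypothetical helper for illustration; it is not provided by Covalent.
    """
    remote_dir = shlex.quote(config["remote_dir"])
    # mkdir -p succeeds if the directory exists or can be created;
    # test -w then confirms that it is writable.
    remote_cmd = f"mkdir -p {remote_dir} && test -w {remote_dir}"
    return [
        "ssh",
        "-i", config["ssh_key_file"],
        f'{config["username"]}@{config["hostname"]}',
        remote_cmd,
    ]


command = build_ssh_check_command(ssh_config)
print(shlex.join(command))  # copy-pasteable shell command
```

Passing `command` to `subprocess.run` and checking for a zero return code would perform the actual check.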

The user can decorate an electron within a workflow by passing `"ssh"` as the electron's executor argument:

    import covalent as ct

    @ct.electron(executor="ssh")
    def my_task():
        import socket

        return socket.gethostname()

Alternatively, the user can declare a class object to customize behavior within particular tasks:

    from covalent.executor import SSHExecutor

    executor = SSHExecutor(
        username="user",
        hostname="host2.hostname.org",
        remote_dir="/tmp/covalent",
        ssh_key_file="/home/user/.ssh/host2/id_rsa",
    )

    @ct.electron(executor=executor)
    def my_custom_task(x, y):
        return x + y

The user may now execute the electron on the remote machine. Check out the Covalent SSH plugin repo to learn more: https://github.com/AgnostiqHQ/covalent-ssh-plugin

💻 Execution on a Local Dask Cluster

In previous releases, Covalent workflows could be dispatched for execution on the host machine. Starting with release v0.106.0, a Dask executor plugin interfaces with a running Dask cluster, allowing users to deploy tasks to the cluster by providing the scheduler address to the executor object. Users can customize the execution of individual electrons within a workflow by passing them an executor configured with the local Dask cluster's scheduler address.

In order to dispatch workflows to a Dask cluster, the user has to install the Dask plugin using pip:

    pip install covalent-dask-plugin

After installing the Dask plugin, run the following Python code to start a local Dask cluster and retrieve the scheduler address:

    from dask.distributed import LocalCluster

    cluster = LocalCluster()
    print(cluster.scheduler_address)

The local Dask cluster's scheduler address looks like `tcp://127.0.0.1:59183`. Note that the Dask cluster does not persist when the process terminates.
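Because the cluster disappears with the process that started it, it can be worth confirming that the scheduler is still listening before dispatching a workflow. The sketch below uses only the Python standard library; the function name `scheduler_is_reachable` is hypothetical and it assumes an address of the form `tcp://host:port` as shown above.

```python
import socket
from urllib.parse import urlsplit


def scheduler_is_reachable(scheduler_address, timeout=2.0):
    """Return True if a TCP connection to the scheduler address succeeds.

    Hypothetical helper for illustration; it only checks that something is
    listening on the port, not that it is a healthy Dask scheduler.
    """
    parts = urlsplit(scheduler_address)
    if parts.scheme != "tcp" or parts.hostname is None or parts.port is None:
        raise ValueError(f"expected tcp://host:port, got {scheduler_address!r}")
    try:
        # Open and immediately close a connection to the scheduler port.
        with socket.create_connection((parts.hostname, parts.port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, timed out, or host unreachable.
        return False
```

For example, `scheduler_is_reachable(cluster.scheduler_address)` could be called right before constructing the executor.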

This cluster can be used with Covalent by providing the scheduler address:

    import covalent as ct
    from covalent.executor import DaskExecutor

    dask_executor = DaskExecutor(scheduler_address=cluster.scheduler_address)

    @ct.electron(executor=dask_executor)
    def my_custom_task(x, y):
        return x + y

A workflow containing the electron can be constructed and dispatched as usual. This will execute the workflow's electron on the local Dask cluster:

    @ct.lattice
    def workflow(y, z):
        value = my_custom_task(y, z)
        return value

    dispatch_id = ct.dispatch(workflow)(1, 2)

After executing the electron, the results of the workflow can be retrieved as usual:

    result = ct.get_result(dispatch_id=dispatch_id)

Note: If you are using the same executor for the entire workflow, you can define it at the lattice level instead of giving each electron its own executor; the electrons then need only a plain `@ct.electron` decorator. For example,

    @ct.lattice(executor=dask_executor)
    def workflow(y, z):
        ...

will make `dask_executor` the default for all electrons inside `workflow`.
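The idea of a lattice-level default can be pictured with a small, self-contained model. This is only an illustration, not Covalent's implementation: the function `resolve_executor` and the fallback name `"local"` are hypothetical, and the sketch assumes that an executor set on an electron takes precedence over the lattice-level default.

```python
def resolve_executor(electron_executor, lattice_executor, fallback="local"):
    """Pick the executor for one task.

    Order of precedence in this toy model: the electron's own setting,
    then the lattice-level default, then a global fallback.
    """
    if electron_executor is not None:
        return electron_executor
    if lattice_executor is not None:
        return lattice_executor
    return fallback


# An electron without its own executor inherits the lattice default.
print(resolve_executor(None, "dask_executor"))
# An electron with an explicit executor keeps it.
print(resolve_executor("ssh", "dask_executor"))
```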

Check out the Dask plugin repo to learn more – https://github.com/AgnostiqHQ/covalent-dask-plugin

🖥️ Execution on a Slurm machine

We are excited to announce Covalent’s support for Slurm – perhaps the most popular open source high-performance cluster job management system. This executor plugin interfaces Covalent with HPC systems managed by Slurm. For workflows to be deployable, users must have SSH access to the Slurm login node, writable storage space on the remote filesystem, and permissions to submit jobs to Slurm.

In order to use the Slurm plugin, simply install the plugin with pip:

    pip install covalent-slurm-plugin

The following snippet illustrates how a user might modify their Covalent configuration to support Slurm:

    [executors.slurm]
    username = "user"
    address = "login.cluster.org"
    ssh_key_file = "/home/user/.ssh/id_rsa"
    remote_workdir = "/scratch/user"
    cache_dir = "/tmp/covalent"
    conda_env = ""

    [executors.slurm.options]
    partition = "general"
    cpus-per-task = 4
    gres = "gpu:v100:4"
    exclusive = ""
    parsable = ""

The first block describes default connection parameters for a user who can connect to the Slurm login node using `ssh -i /home/user/.ssh/id_rsa user@login.cluster.org`. The second block describes default parameters used to construct a Slurm submit script. In this example, the submit script would contain the following preamble:

    #!/bin/bash
    #SBATCH --partition=general
    #SBATCH --cpus-per-task=4
    #SBATCH --gres=gpu:v100:4
    #SBATCH --exclusive
    #SBATCH --parsable
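The mapping from the options block to this preamble can be sketched in a few lines of Python. This is an illustration of the convention shown above, not the plugin's actual code: the function name `render_sbatch_preamble` is hypothetical, and it assumes that an empty-string value denotes a flag without an argument.

```python
def render_sbatch_preamble(options):
    """Render a Slurm submit-script preamble from an options mapping.

    Hypothetical helper: empty-string values become bare flags,
    everything else becomes --name=value.
    """
    lines = ["#!/bin/bash"]
    for name, value in options.items():
        if value == "":
            lines.append(f"#SBATCH --{name}")
        else:
            lines.append(f"#SBATCH --{name}={value}")
    return "\n".join(lines)


# Same values as the [executors.slurm.options] example.
options = {
    "partition": "general",
    "cpus-per-task": 4,
    "gres": "gpu:v100:4",
    "exclusive": "",
    "parsable": "",
}

preamble = render_sbatch_preamble(options)
print(preamble)
```

With the example options, the printed text matches the preamble listed above line for line.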

The user can decorate an electron in a workflow using the above settings:

    import covalent as ct

    @ct.electron(executor="slurm")
    def my_task(x, y):
        return x + y

Alternatively, the user can use a class object to customize behavior scoped to specific tasks:

    from covalent.executor import SlurmExecutor

    executor = SlurmExecutor(
        remote_workdir="/scratch/user/experiment1",
        conda_env="covalent",
        options={
            "partition": "compute",
            "cpus-per-task": 8,
        },
    )

    @ct.electron(executor=executor)
    def my_custom_task(x, y):
        return x + y

Check out the Slurm executor GitHub repo for more details: https://github.com/AgnostiqHQ/covalent-slurm-plugin

✨ A new theme and revamped UI

To go along with these major backend changes and to be inclusive of all the hardware types Covalent users want to access, we have reworked the Covalent brand to reflect the truly diverse nature of the problems we are solving. The previous logo, a “C” meant to indicate connections, has been replaced by a logo of different shapes that represent the variety of hardware, software, and resource paradigms working in unison to generate results. What used to be futuristic with neon colors has now transitioned to a more pastel feel to indicate the immediate need for such a tool. We hope you all enjoy the new look as much as we do!

🩹 Known issues

Apart from the issues already documented on GitHub, some of the critical known issues in this release are:

- Performance degrades with the number of electrons. We recommend keeping the number of electrons under 50 for now. The underlying inefficiencies will be addressed in a future release.

- On rare occasions, the Covalent server returns HTTP 500 Internal Server Error when submitting a workflow, even while other workflows can be submitted. The underlying causes are being investigated. A temporary workaround is to restart the server (please wait until ongoing workflows are completed).

- `covalent status` sometimes incorrectly reports that the Covalent server is running. The underlying bug has been identified and will be patched in a future release.

- Errors in undetected modules arise when constructing leptons

- Path errors in required libraries (e.g. gcc) when constructing leptons

- Sublattice's function strings show unredacted code

- Running `covalent --version` on Ubuntu 20.04 throws import errors

- SSH plugin is not robust for sublattices and large workflows

- UI graph spreads out the layout poorly after a critical number of electrons.

Contributors

This release would not have been possible without the hard work of the team at Agnostiq and our contributors: @AgnostiqHQ, @mshkanth, @Prasy12, @amalanpsiog, @Socrates